From AI coding to AI coding

(midwest.social)

I check it out once in a while to see what's going on, and the OpenAI people apparently tried to fix the sycophancy problem by turning it into an insufferable pedant.

"I want to check... you should put pants on before leaving the house, right?"

"That's not exactly right. Putting pants on involves putting your legs into the leg holes in the pants. After that you should zip and button any zips and buttons on your pants."

"Sir, you seem to be low on vitamin C, which gave you scurvy, but Grok says that it is more likely to be a psychosomatic response to an internal conflict between the way you live your life, and the Hitler inside you waiting to be let out"

Reid Hoffman: the original LinkedIn Lunatic

Hey Hoffman, remember the sneezes you had in succession last winter for 2 weeks straight? I asked ChatGPT and it tells me it's brain cancer. Are you going to start cancer therapy ASAP?

PS: people who still remember WebMD in its early days would never trust a machine for a full diagnosis, let alone consider this an option.

I hope his doctor does ask ChatGPT and it prescribes him a penectomy.

Another one?

He needs help to care for more people than just himself.

Older adults have always made fools of themselves when new tech comes out, scrambling over each other to either voice dramatically out-of-touch opinions or avoid it entirely while preaching moral panic. This time around with AI, there's not a big financial barrier to access, so the youth are equally in on the game.

Context: "Coding" in medical nomenclature refers to a code blue (a life-threatening emergency).

This shit is bananas

Your comment just caused someone who needs FMT to receive bananas instead.

Y'all will screech but having a giant ass search engine that is able to process patient data in context is incredibly useful.

Y'all are just prejudiced. Professionals will be using these tools, and they have already been providing excellent real-world results. Honestly, if you don't understand how the medical community is using AI/ML with real validated results, you should be keeping your mouth shut on the topic.

having a giant ass search engine that is able to process patient data in context is incredibly useful.

Yeah. Wouldn’t that be nice. That’s not what an LLM is, however.

Why did you assume AI = only LLM? LLMs are just one type of AI, and not the type most often used in medicine

Reid Hoffman is one of those lunatics who thinks LLMs are intelligent. His entire thing is peddling that shit.

LLMs being “search engines” is also a really popular misconception.

I didn't know the guy, I was just addressing the general point of using AI in medicine tbh 🤷♀️

You're completely correct that LLMs are not search engines, of course

~~So then I guess you shouldn't have led with:~~

~~> but having a giant ass search engine~~

~~Say what you mean and mean what you say or you're just spouting shit that no one will listen to.~~

that wasn't me?! I was just replying to the bit about conflating AI with LLMs, like I was saying 😭

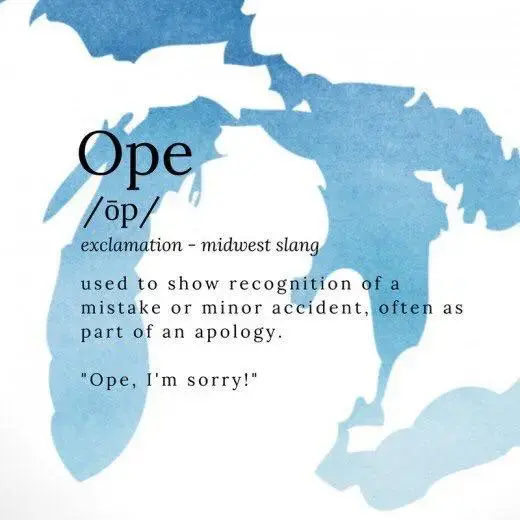

Oh jeeze, my apologies, poor attention paying on my part. Please carry on.

👌👍

Hmmm a 1 day-old account rushing in to defend inappropriate LLM use 🤔

You can only get banned for an opinion so much before moving on to the next one 🤷♂️.

Hi Reid

If LLMs didn't hallucinate I'd fully agree with you

Which is why your doctor should use it as a tool and validate the results. You know, do their job.

Y'all are just fucking binary. How do you think medical community members work now? They use a shitty search engine or portal to look up material, and yes, some of it will be garbage they need to wade through.

But God forbid they have a tool that puts that information into a cited overview to supplement a tricky diagnosis. The prejudice and fake workflows that y'all invent are crazy. Looking for little edge cases everywhere, catching the AI in a mistake.

I have no problem with them using search engines. They can vet and choose answers from reliable sources. From an LLM, it's anybody's guess if anything it pulled up is correct, and a less experienced doctor could be misled into making a dangerous mistake.

Riiiiiiiiiight, LLMs don't cite sources and the portals written in the 90s for journals solve all of that.

It's so amazing to watch you all invent these crazy scenarios, where you've chosen the absolute lowest bar you can find. As if some layman who has no clue how to use this tool is working on some free Claude account because you read about one shitty doctor or lawyer fucking up. It's honestly sad seeing these hoops to jump through.

Professional tools, run by some of the most educated Type A professionals on the planet, minimize these risks by providing defaults and interfaces along with education.

FFS they can (and will) kill you accidentally with far more simple shit that can't be mostly mitigated away. But yeah, because LLMs can be used poorly by morons it's worthless 🙄.

When was the last time you used them? They can provide sources for pretty much everything they say and that source usually also contains said thing too.

But even if not, even 2 years ago, it was already good because you had a second look, a different perspective. A medical professional can either know a little about everything or a lot about next to nothing. It should be really obvious how such a tool can help, even if it cannot reach expert level.

"Don't worry, when you ask it for sources it gives you some. Sometimes they are even real! And sometimes the real ones even say the thing they were supposed to have said from the AI!"

Fucking lunacy.

Using AI as a tool to find additional information? Sure, could be doable maybe.

Asking sycophantic “you’re absolutely correct” machines for a second opinion? Absolutely not!

Hoffman is advocating for the latter.

Imagine believing that they'll use general purpose free chatgpt. Just amazing these scenarios you all invent. I can't tell if it's just straight blind prejudice or you all really don't understand how it can integrate into tooling with very specific models.

Just wild what people have cooked up in their mental model.
